AI Agents Development Services for Businesses Operating at Scale

- Leanware Editorial Team

You already know automation is valuable. You've probably tried at least one platform to prove it. What happened next is where most companies at the $5M to $50M stage land: the platform handled 70% of the workflow, then stopped. The remaining 30% involved your specific approval logic, your CRM field naming, or an edge case the tool was never designed to know about. So the workflow still requires a human in the loop, the investment didn't pay off the way you modeled it, and now you're evaluating whether to try again or move on.

Custom AI agent development is the answer to that specific problem. It is not a different flavor of the same category of tool. It is a fundamentally different approach: software built around your workflows, your systems, and your decision logic, deployed in production and maintained by the team that built it.

Let’s see how that works, what it costs, and how to evaluate whether your problem is a good fit.

What Are AI Agents Development Services?

An AI agent is software that can interpret inputs, reason through them, and take action without requiring human decisions at every step. That’s what distinguishes AI agents from traditional automation tools and standard chatbots. Chatbots mainly respond to prompts, while rule-based automation follows predefined logic. AI agents, on the other hand, can adapt to changing conditions, work with unstructured data, and make context-aware decisions in workflows that are not entirely predictable.

AI agent development services focus on designing and building these systems around the unique operational needs of a business. That includes your data sources, business rules, integrations, workflows, and exception handling requirements. Instead of delivering a universal tool, the goal is to create an AI agent that can operate effectively within your existing systems and perform meaningful business tasks in real-world conditions.

How AI Agents Differ from Traditional Automation

Rule-based automation, including most RPA systems, works reliably when inputs are consistent and processes stay predictable. But when formats change, APIs shift, or unexpected exceptions appear, those workflows often break and require manual fixes. That is because rules engines are designed to follow predefined logic, not interpret context.

AI agents add a reasoning layer on top of execution. Instead of relying only on fixed rules, they can interpret unfamiliar inputs, evaluate context, and adapt their decisions in real time. If a document arrives in a new format or an approval requires judgment beyond predefined conditions, the agent can often handle it without defaulting to rejection or escalation.

This is where many businesses begin to see the limitations of platforms like Lindy, Avoca, Compass AI, or Microsoft Copilot. The challenge usually is not automation itself. It is handling the business-specific nuance and ambiguity that fixed workflows cannot account for.

The Role of LLMs, RAG, and Multi-Agent Frameworks

A production-ready AI agent is built on three components working together.

A large language model (LLM) handles language understanding and reasoning.

Retrieval-augmented generation (RAG) grounds the agent's outputs in your actual business data rather than model memory, which is the primary engineering control for hallucination risk.

An orchestration framework manages multi-step task execution, state persistence across interactions, and coordination across multiple agents when the workflow requires it.

Leanware builds stateful agents using LangGraph and Pydantic AI as the orchestration layer, with models from OpenAI and Anthropic selected based on the performance and cost requirements of each use case. The orchestration layer is what makes agents reliable in production rather than impressive in a demo environment.
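To make the three components concrete, here is a minimal, standard-library-only sketch of how retrieval, generation, and step-by-step orchestration fit together. It is an illustration under stated assumptions, not Leanware's implementation: it does not use LangGraph, Pydantic AI, or any model API. The `DOCUMENTS` list, the `fake_llm` stand-in, and the keyword-overlap retrieval are all hypothetical simplifications.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """State carried across steps; a real orchestration layer would persist this."""
    question: str
    context: list[str] = field(default_factory=list)
    answer: str = ""


# Hypothetical knowledge base standing in for real business documents.
DOCUMENTS = [
    "Invoices over 10000 USD require approval from the finance lead.",
    "Lapsed customers are contacted after 90 days of CRM inactivity.",
]


def retrieve(state: AgentState, top_k: int = 1) -> AgentState:
    """Naive keyword-overlap retrieval; production systems typically use embeddings."""
    words = set(state.question.lower().split())
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc: len(words & set(doc.lower().split())),
        reverse=True,
    )
    state.context = ranked[:top_k]
    return state


def fake_llm(prompt: str) -> str:
    """Placeholder for a model call; it only echoes the grounded context."""
    return prompt.split("Context: ", 1)[1]


def generate(state: AgentState) -> AgentState:
    """Build a prompt that restricts the answer to the retrieved context."""
    prompt = f"Question: {state.question}\nContext: {' '.join(state.context)}"
    state.answer = fake_llm(prompt)
    return state


def run_agent(question: str) -> AgentState:
    """Run the steps in order, passing state from one step to the next."""
    state = AgentState(question=question)
    for step in (retrieve, generate):
        state = step(state)
    return state


result = run_agent("Who must approve invoices over 10000 USD?")
print(result.answer)
```

The point of the sketch is the separation of concerns: retrieval decides what the model is allowed to see, generation works only from that context, and the orchestration loop owns the state that moves between steps.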

Why Businesses Are Investing in AI Agents Development

For companies in the $5M to $50M revenue range, operational capacity often becomes a bigger constraint than headcount. Teams spend increasing amounts of time on repetitive knowledge work, high-volume customer interactions, and analysis that takes longer than the decisions it is meant to support. That is where AI agents become valuable.

The business case is relatively simple: if a task is repetitive, has clear inputs, and a well-defined outcome, it can often be handled by an AI agent at scale. That allows teams to spend more time on work that requires human judgment, strategy, or relationship management.

Key Business Problems AI Agents Solve

The strongest AI agent use cases are usually operational and highly specific: following up with leads who submit forms but do not book calls, responding to customer reviews quickly and consistently, triggering re-engagement campaigns based on CRM inactivity, extracting and validating invoice data across inconsistent formats, and summarizing contracts, market research, or recurring performance data into structured reports.

These workflows are repetitive, rules-driven, and outcome-oriented, which makes them well suited for agent automation.

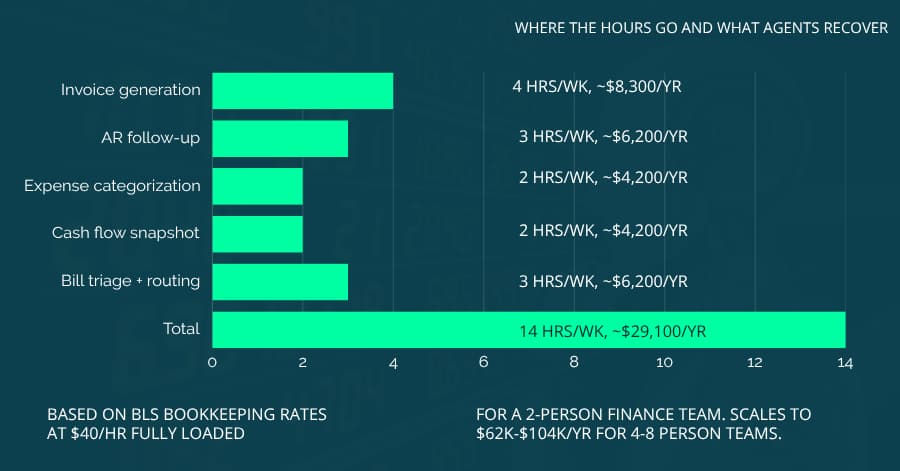

ROI and Efficiency Gains from Agentic Systems

The financial case for AI agents is becoming easier to quantify, though the numbers are worth reading carefully rather than applying as benchmarks.

For sales development, AI SDR systems book meetings at a fraction of human SDR cost, typically an order of magnitude lower per meeting based on 2026 industry data. The qualification rate gap is real: AI-booked meetings convert at lower rates than human-sourced ones, and how much that narrows the net economics depends on your deal size and average contract value. For high-volume, lower-ACV products the math typically holds; for enterprise sales where meeting quality matters more than meeting volume, the comparison requires closer scrutiny of your specific numbers.

For carrier invoice reconciliation in 3PL operations, or purchase order matching against bills of materials in manufacturing, structured extraction agents consistently reduce processing cost and cycle time by a significant margin compared to manual methods. The specific savings depend on invoice volume, format complexity, and how many systems require reconciliation. The ROI case for any specific workflow should be validated during scoping rather than assumed from industry figures.

Results vary by implementation quality, workflow complexity, and the state of your underlying data. These figures represent well-implemented systems built on clean data, not default platform configurations.
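The sales development comparison above comes down to one piece of arithmetic: cost per meeting divided by the rate at which those meetings qualify. The sketch below shows that calculation. Every number in it is a hypothetical placeholder chosen for illustration, not industry data, and the function name is an assumption of this example.

```python
def cost_per_qualified_meeting(cost_per_meeting: float, qualification_rate: float) -> float:
    """Effective cost of one meeting that actually qualifies."""
    if not 0 < qualification_rate <= 1:
        raise ValueError("qualification_rate must be in (0, 1]")
    return cost_per_meeting / qualification_rate


# Hypothetical inputs for illustration only; substitute your own measured numbers.
human = cost_per_qualified_meeting(cost_per_meeting=500.0, qualification_rate=0.5)
ai = cost_per_qualified_meeting(cost_per_meeting=50.0, qualification_rate=0.25)

print(f"human SDR: ${human:,.2f} per qualified meeting")
print(f"AI SDR:    ${ai:,.2f} per qualified meeting")
```

With these placeholder inputs the AI figure stays lower even at half the qualification rate. Whether that holds for a given business depends on the real cost and conversion numbers, which is why the comparison should be rerun with measured values during scoping.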

How Leanware Delivers

Leanware operates as a managed service rather than a code delivery engagement. Production AI agents require ongoing refinement as business conditions change: model updates affect behavior, integrations evolve, and new edge cases surface in real usage. The managed service model means Leanware stays responsible for all of that after launch, not just during the build.

Managed Service, Setup and Monthly Subscription

Every engagement starts with a setup fee covering discovery, architecture, development, and integration. After launch, a fixed monthly subscription covers all API costs, compute, hosting, model orchestration, and continuous refinement, with no separate cloud bill, no model licensing invoice, and no hidden infrastructure cost. One monthly number covers everything.

What "managed" actually means in practice: continuous evaluation of the agent's performance against the baseline established in the Assessment, prompt and model refinement on a defined cadence, drift monitoring as conditions change, escalation triggers when edge cases exceed defined thresholds, and bundled API, hosting, and model costs in the monthly fee. Leanware stays on retainer throughout the engagement. When an integration changes, when a model update affects behavior, or when a new edge case emerges in production, that work is handled within the subscription. You define what the agent should achieve. The team handles everything required to keep achieving that.
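As a rough illustration of drift monitoring with an escalation trigger, the sketch below tracks a rolling window of outcomes and flags when accuracy falls more than a set tolerance below the baseline. The class name, the window size, and the threshold values are hypothetical choices for this example. The article does not specify how Leanware implements monitoring.

```python
from collections import deque


class DriftMonitor:
    """Tracks recent outcomes and flags drift against a fixed baseline."""

    def __init__(self, baseline_accuracy: float, tolerance: float, window: int) -> None:
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)

    def record(self, correct: bool) -> None:
        """Store whether one agent decision matched the expected outcome."""
        self.outcomes.append(correct)

    def accuracy(self) -> float:
        """Share of correct outcomes in the current window."""
        return sum(self.outcomes) / len(self.outcomes)

    def should_escalate(self) -> bool:
        """Escalate only once the window is full and accuracy has dropped too far."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.baseline_accuracy - self.tolerance


# Hypothetical baseline and thresholds, for illustration only.
monitor = DriftMonitor(baseline_accuracy=0.9, tolerance=0.05, window=10)
for correct in [True] * 8 + [False] * 2:
    monitor.record(correct)

print(monitor.accuracy(), monitor.should_escalate())
```

Waiting for a full window before escalating is a deliberate choice in this sketch: it avoids raising alerts on the first few decisions, at the cost of reacting more slowly right after launch.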

How Engagements Are Scoped

Engagement scope follows directly from what the Assessment finds: the number of workflow steps involved, the systems that need to integrate, the complexity of the exception handling logic, and the performance baseline against which ROI will be measured. Pricing reflects those variables, not a pre-assigned tier. The Assessment is where scope gets defined against your actual workflow, and it produces a recommended engagement structure with projected costs before development begins.

The reference cases on the marketing site give ballpark anchors for similar workflow types. Those, combined with the Assessment output, are what carry the buyer's cost expectation into the build. Scope determined before an engineer has seen the workflow is not a precision estimate, and Leanware does not publish it as one.

Measurement Window

Engagements are designed around a measurement window. ROI is calculated at month four against the baseline established in the Assessment. By that point, deployment, calibration, and the first iteration cycle have all run. Continued refinement happens beyond that point.
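A measurement-window calculation of this kind can be sketched as follows. The article does not state the formula Leanware uses, so this assumes the common definition of ROI as (cumulative savings minus total spend) divided by total spend. The parameter names and all dollar amounts are hypothetical.

```python
def roi_at_month(
    baseline_monthly_cost: float,
    agent_monthly_cost: float,
    setup_fee: float,
    monthly_subscription: float,
    months: int = 4,
) -> float:
    """ROI over the window: (cumulative savings - total spend) / total spend."""
    # Savings are measured against the pre-agent baseline cost of the workflow.
    savings = (baseline_monthly_cost - agent_monthly_cost) * months
    spend = setup_fee + monthly_subscription * months
    if spend <= 0:
        raise ValueError("total spend must be positive")
    return (savings - spend) / spend


# Hypothetical figures for illustration only.
roi = roi_at_month(
    baseline_monthly_cost=20_000.0,  # workflow cost measured before the agent
    agent_monthly_cost=5_000.0,      # residual manual cost after deployment
    setup_fee=20_000.0,
    monthly_subscription=5_000.0,
    months=4,
)
print(f"ROI at month 4: {roi:.0%}")
```

The baseline figure matters more than any other input here, which is why it needs to be measured before the build rather than estimated afterwards.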

Transparent Pricing

Pricing reflects LATAM delivery economics. Leanware's engineering team operates from Latin America with full US time zone overlap. The cost difference relative to US or EU agency rates is structural, and it is passed to clients directly.

AI ROI Assessment: The Entry Point

Before any build begins, Leanware offers an AI ROI Assessment: a two-week, $4,500 engagement that produces a baseline audit of your current operations, an opportunity map of three to five ranked use cases, ROI projections per workflow, and a 90-day roadmap with a recommended engagement scope expressed in the variables that actually determine cost, including steps, integrations, and target savings. The $4,500 is credited 100% toward the build if you proceed within 30 days.

The Assessment is also where scope risk gets managed. Rather than entering a build and discovering a scope mismatch six weeks in, the Assessment determines what the engagement actually requires before development starts. That scoping step is the primary risk reduction mechanism in custom AI work, and it is worth taking seriously before any development budget is committed.

AI Agent Types and Use Cases by Industry

The categories below cover the deployment patterns Leanware builds most frequently. They are organized by function rather than industry because the underlying agent architecture for a customer follow-up workflow in home services is not meaningfully different from one in real estate. The Industry Applications section that follows covers vertical-specific considerations.

AI Agents for Operations and Process Automation

Platforms like Microsoft Copilot handle the structured, high-volume end of operational automation well. Where they stop is at your specific approval logic, your cross-system reconciliation rules, and the exception handling that makes your operation different from the template.

Operational agents pick up from that point, managing scheduling, approval routing, report generation, and cross-department coordination in environments where the edge cases are where the real cost lives.

Because agents run at consistent quality regardless of team bandwidth, they are most valuable in environments where volume spikes are predictable and manual coverage becomes unreliable at peak.

AI Agents for Customer Experience and Support

Conversational agents handle inbound inquiries, escalation routing, and proactive outreach workflows. In operational terms, that means responding to every inquiry the same day, following up on every unanswered quote, and sending re-engagement messages to lapsed customers on a defined schedule.

The operational advantage is consistency: a human team at capacity handles the high-priority interactions, and the agent handles the volume that would otherwise be missed or delayed.

AI Agents for Data, Research, and Decision Support

Research agents gather, synthesize, and present information that currently requires manual effort to compile. Competitive monitoring, contract review summaries, financial performance digests, and internal knowledge retrieval are all strong candidates. The common thread is structured output produced on a recurring basis from sources the team already maintains but doesn't have consistent time to synthesize.

AI Agents for Software Development and DevOps

Engineering teams use agents for code review assistance, automated test generation, CI/CD pipeline monitoring, and incident response triage. These agents reduce the cognitive load on engineers during high-pressure moments and help maintain code quality standards without adding review bottlenecks to the delivery cycle.

Industry Applications

3PL and B2B Distribution: agents that handle shipment status queries, exception flagging, carrier coordination, and freight document processing without manual intervention at each step.

Insurance MGAs: quote intake agents, policy renewal outreach, document classification, and compliance check automation. The documentation volume in MGA operations makes structured extraction and routing agents particularly high-value from a cycle-time standpoint.

Job Shop Manufacturing: scheduling agents that coordinate job status across ERP, CRM, and shop floor systems, and surface capacity conflicts before they become schedule delays.

Real Estate: lead qualification and routing agents for multi-office brokerages, where leads from Zillow, the brokerage website, and partner sites need to be routed across agents and offices through a custom CRM. Single-agent residential brokerage workflows are typically better served by verticalized SaaS platforms at standard pricing.

Home Services: for multi-truck, multi-region operators with custom dispatch logic, agents handle coordination across locations, fleet, and customer touchpoints, including review response, re-engagement campaigns for lapsed customers, and technician scheduling confirmation. Single-location operators are typically better served by verticalized SaaS like Avoca or ServiceTitan AI.

Financial Services: K-1 and financial statement extraction, SEC/FINRA audit prep, and compliant prospect outreach for RIAs. For regulated environments, audit trail logging and human review gates are designed in from the start, with output documentation structured for compliance review.

The AI Agents Development Process: How We Build, Run, and Continuously Improve It

Leanware owns the full engagement lifecycle from problem scoping through production operation. Clients are not expected to manage infrastructure, monitor model performance, or handle maintenance between updates. The process below shows how that end-to-end ownership is structured in practice, including the continuous improvement loop that runs after launch.

Discovery and Use Case Definition

The discovery phase maps the real workflow through stakeholder conversations, system inventory, and identification of where the highest-friction handoffs occur. Success metrics and scope boundaries are agreed on before development starts. The objective is ensuring the right problem is being solved, not just the most accessible version of it.

Prototype and Proof of Concept

Before full development investment, a focused prototype tests the core logic against real-world inputs. This phase validates accuracy, surfaces edge cases, and confirms that the agent can reliably handle the task under realistic conditions. Development scales only after the prototype demonstrates the problem is solvable at the required performance level.

Iterative Development and Feedback Loops

Development runs in short cycles with regular demos and integrated feedback. As the agent encounters real usage, accuracy improves, exception handling sharpens, and decision logic aligns more closely with operational reality. The team manages and refines the system continuously, so clients receive an agent that improves over time rather than a static build that gradually drifts from its intended behavior.

Integration, Infrastructure, and Reliability

The agent connects to the systems it needs to act on: CRMs, shared drives, ERPs, external APIs. Data handling is designed to meet the security requirements of the operating environment. All hosting, compute, and model orchestration is managed by Leanware. Service commitments in the contract reflect actual operational standards, not aspirational language added during sales.

Launch, Operation, and Continuous Improvement

Launch is the beginning of the operational engagement, not the conclusion of it. After deployment, the team continues monitoring performance, refreshing knowledge bases, applying model updates, and optimizing cost per operation as usage scales. Clients interact with the outcomes the agent produces, not with the infrastructure that produces them.

Technology Stack and Tools We Work With

Stack decisions are where technical credibility in AI development is proven. The following reflects what Leanware deploys in production.

LLM Providers and Foundation Models: Leanware deploys models from OpenAI and Anthropic in production environments. Model selection depends on the performance, latency, and cost requirements of the specific use case rather than vendor preference. Open source models are also evaluated when they provide sufficient capability with lower operating costs.

Agent Frameworks and Orchestration Layers: LangGraph and Pydantic AI are the current defaults for stateful agent development. These frameworks support multi-step execution, state persistence, and coordination between multiple agents. They also make it possible for agents to retain context across sessions and reliably manage complex workflows in production environments.

Infrastructure, Cloud, and Deployment Environments: Agents are deployed across Amazon Web Services, Google Cloud Platform, Microsoft Azure, and hybrid environments, depending on where the client’s systems and data already reside. Containerized deployments allow production workloads to scale without requiring clients to manage infrastructure directly.

What to Look for in an AI Agents Development Partner

Evaluating a custom AI development partner requires different criteria than evaluating a software vendor. The following dimensions are the ones that actually determine whether the engagement produces working production agents.

Full-Stack AI Expertise Beyond Prompting

A partner whose capability stops at prompt engineering and API calls cannot build production-reliable agents.

Architecture design, integration engineering, orchestration logic, and MLOps determine whether an agent performs consistently at 100 queries per day or 10,000, and whether it recovers when a dependency fails rather than silently degrading.
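One way to read "recovers when a dependency fails rather than silently degrading" is a retry wrapper that backs off between attempts and then raises an explicit error instead of returning a partial result. The sketch below shows that pattern. The `DependencyUnavailable` exception, the `flaky_crm_lookup` function, and the retry parameters are hypothetical names invented for this example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class DependencyUnavailable(Exception):
    """Raised when every attempt fails, so the failure is visible to callers."""


def call_with_retry(
    operation: Callable[[], T],
    attempts: int = 3,
    base_delay_seconds: float = 0.0,
) -> T:
    """Retry a flaky call with exponential backoff, then fail loudly."""
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return operation()
        except ConnectionError as error:
            last_error = error
            # Delay doubles on each attempt: base, 2x base, 4x base, ...
            time.sleep(base_delay_seconds * (2 ** attempt))
    raise DependencyUnavailable(f"gave up after {attempts} attempts") from last_error


calls = {"count": 0}


def flaky_crm_lookup() -> str:
    """Hypothetical dependency that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("CRM timeout")
    return "lead-123"


print(call_with_retry(flaky_crm_lookup))
```

The important design choice is the final `raise`: when retries run out, the caller gets an unambiguous failure it can route to a person, rather than an empty or stale answer that looks like success.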

Domain Expertise to Drive Real Business Impact

Technical capability without industry context produces agents that work in demos and underperform in production. The partner needs to understand what a correct outcome looks like in your operating environment: what the edge cases mean, what the exceptions cost, and where a wrong answer causes downstream problems rather than just a minor inconvenience.

Transparent and Collaborative Delivery Approach

AI development that happens in a black box carries specific production risk: you can receive an agent that behaves well in controlled testing and behaves unpredictably when real usage patterns differ from what was anticipated. Iterative demos, shared performance metrics, and clear documentation of the agent's scope and limitations are not optional features of a trustworthy engagement. They are the minimum standard.

Scoping That Follows Discovery

A partner who publishes a fixed tier menu before seeing your workflow is anchoring scope before an engineer has evaluated the problem. That is a misalignment signal, not a quality signal. Credible AI development firms scope engagements from the variables found during discovery, not from a pre-priced menu. You should know the recommended scope and the cost before the first development sprint starts, and that recommendation should follow from what was actually found during assessment, not from a table that existed before the conversation began.

Build Times That Match Business Urgency

A six-month discovery engagement before development begins is misaligned with the pace at which the problems these agents solve are costing you money. Leanware's four-to-ten-week build-to-production timeline reflects a productized delivery model with clear scope gates and a team that has built similar agents before, not a shortcut that trades quality for speed.

Final Thoughts

The companies that get the most out of AI agents are the ones that started with a workflow they could measure, proved the math at month four, and then expanded from a known baseline. The platform-graduate problem, where 70% of the workflow is automated and the remaining 30% is where your business actually lives, does not get solved by trying a different platform. It gets solved by starting from your workflow rather than from what a platform was built to handle.

The development partner also matters. Building the system is only one part of the process. Long-term value depends on how well the agent is maintained, monitored, and refined in production. Start with an AI ROI Assessment. $4,500. Two weeks. 100% credited toward your build within 30 days.

Connect with Leanware to evaluate where AI agents can create the highest operational impact in your business. The AI ROI Assessment helps validate the use case, scope the workflow, and estimate the expected return before development begins.

Frequently Asked Questions

We'll just use ChatGPT for this. Why do we need custom development?

ChatGPT and similar interfaces are well suited for ad hoc tasks where a human reviews and directs each output. Custom AI agents are for workflows that need to run autonomously, at volume, connected to your systems, and reliably over weeks and months.

A ChatGPT prompt does not follow up with 200 leads in your CRM, route exceptions in your AP workflow, or monitor production alerts and open tickets without someone actively managing the session. Custom development builds the orchestration, integration, and reliability layer that turns a capable model into something that operates as part of your business processes without ongoing manual direction.

AI hallucinates. How do I know the agent won't make things up?

Hallucination is a real failure mode, and retrieval-augmented generation is the primary engineering control for it in production systems. By grounding agent outputs in your actual documents, databases, and structured data rather than model memory, RAG substantially narrows the surface area where confabulation can occur.

Beyond RAG, production agents include output validation layers, confidence thresholds, and human review gates at defined decision points. The design goal is not zero hallucination risk in the abstract; it is a system where failures are caught before they produce operational harm.
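The layering described above can be sketched as a small routing function: validate the output first, then apply a confidence threshold, and send anything uncertain to a person. This is a simplified illustration under stated assumptions. The `AgentOutput` fields, the 0.8 threshold, and the three route names are hypothetical, and the sketch assumes the agent supplies a confidence score between 0 and 1.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    """One extracted value plus the agent's self-reported confidence."""
    invoice_total: float
    confidence: float  # assumed to be a score in [0, 1] supplied by the agent


def route_output(output: AgentOutput, min_confidence: float = 0.8) -> str:
    """Return 'reject', 'human_review', or 'auto_approve' for one agent output."""
    # Validation layer: structurally impossible values never reach downstream systems.
    if output.invoice_total < 0 or not 0.0 <= output.confidence <= 1.0:
        return "reject"
    # Confidence threshold: uncertain outputs go to a person instead of being applied.
    if output.confidence < min_confidence:
        return "human_review"
    return "auto_approve"


# Hypothetical outputs for illustration only.
for candidate in (
    AgentOutput(invoice_total=1250.0, confidence=0.95),
    AgentOutput(invoice_total=1250.0, confidence=0.60),
    AgentOutput(invoice_total=-40.0, confidence=0.99),
):
    print(candidate, "->", route_output(candidate))
```

Note that the negative total is rejected even at high confidence. Validation runs before the confidence check precisely because a confident wrong answer is the case that causes the most operational harm.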

What if we become too dependent on your infrastructure and can't leave?

Vendor lock-in is a legitimate concern with managed AI services. Leanware mitigates it architecturally by building on open orchestration frameworks, specifically LangGraph and Pydantic AI, rather than proprietary platforms.

System architecture is documented throughout the engagement, and all agent configurations are built on portable, open components. The specifics of data portability and exit terms are addressed during the engagement, not assumed from a standard template.

We tried RPA and it kept breaking. Why would AI agents be different?

RPA breaks on input variation because it pattern-matches against fixed templates. When a form layout changes, an API response shifts structure, or a process step introduces a new exception type, a rule update is required. AI agents reason about inputs rather than matching them, so normal operational variance is handled rather than rejected.

The failure mode for AI agents is different: they require refinement as conditions shift over time. But they do not break on the kind of routine variation that makes RPA fragile in real-world deployments.

We already tried Lindy, Avoca, Compass AI, or Microsoft Copilot and it didn't fully work. Why would custom development be different?

SaaS AI platforms are optimized for the 80% of use cases that can be standardized. They handle common workflows well and hit a ceiling where your specific business logic, integration requirements, or exception handling is too specific for a configurable product to address.

If you have already worked through a platform implementation and found that the remaining edge cases are where the operational value actually lives, custom development begins from your actual workflow rather than from what the platform was built to handle. That is a structural difference in the starting point, and it is what produces different outcomes.
