LangFlow Agents: Build & Deploy AI Agents Easily

LangFlow agents extend what language models can do. Instead of just generating text responses, agents use tools, access external data, and take actions autonomously. They reason about tasks, decide which tools to use, and chain operations together to accomplish goals.

Let’s break down how you can build functional agents in LangFlow, starting with the fundamentals and moving all the way to deployment.

What are Agents in LangFlow?

An agent in LangFlow acts as an intelligent coordinator. It receives input, uses a language model to reason about what actions to take, executes those actions through connected tools, and generates a response based on the results.

LangFlow provides an Agent component that handles orchestration complexity. You configure the LLM, attach tools, set instructions, and the component manages the reasoning loop.
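To make the reasoning loop concrete, here is a minimal, self-contained sketch in plain Python. It is not LangFlow's implementation. `fake_llm` is a hypothetical rule-based stand-in for the model's decision step, and `calculator` is a toy tool. The loop shows the pattern the Agent component automates: ask the model for a decision, run the chosen tool, feed the observation back, and stop when the model produces a final answer or a step limit is reached.

```python
import operator
from typing import Callable

def calculator(expression: str) -> str:
    """Toy tool: evaluate a single binary expression such as '12 * 7'."""
    left, op, right = expression.split()
    ops = {"+": operator.add, "-": operator.sub,
           "*": operator.mul, "/": operator.truediv}
    return str(ops[op](float(left), float(right)))

# Hypothetical tool registry: tool name -> callable.
TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator}

def fake_llm(question: str, observations: list[str]) -> dict:
    """Hypothetical stand-in for the LLM: choose a tool call or a final answer."""
    if observations:
        return {"final": f"The answer is {observations[-1]}."}
    if any(ch.isdigit() for ch in question):
        expression = question.removeprefix("What is ").rstrip("?")
        return {"action": "calculator", "input": expression}
    return {"final": "I can answer that without tools."}

def run_agent(question: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        decision = fake_llm(question, observations)
        if "final" in decision:
            return decision["final"]
        # Execute the tool the "model" picked and record its output.
        tool = TOOLS[decision["action"]]
        observations.append(tool(decision["input"]))
    return "Stopped: step limit reached."

print(run_agent("What is 12 * 7?"))  # The answer is 84.0.
```

The `max_steps` guard matters in practice: without it, a model that keeps requesting tools would loop indefinitely.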

How Agents Differ from Standalone LLMs

A standalone LLM receives a prompt and returns text. It cannot access current information, interact with external systems, or perform actions beyond generating responses.

Agents use the LLM as a reasoning engine while connecting to tools that extend capabilities. An agent can search the web, query databases, read files, call APIs, or execute code. The LLM decides which tool fits each request and interprets the results.

Ask a standalone LLM for today's weather, and it cannot help; an agent with a weather tool retrieves the actual forecast. Ask about your company's sales data, and an agent connected to your database can query it directly.

Core Components of a LangFlow Agent

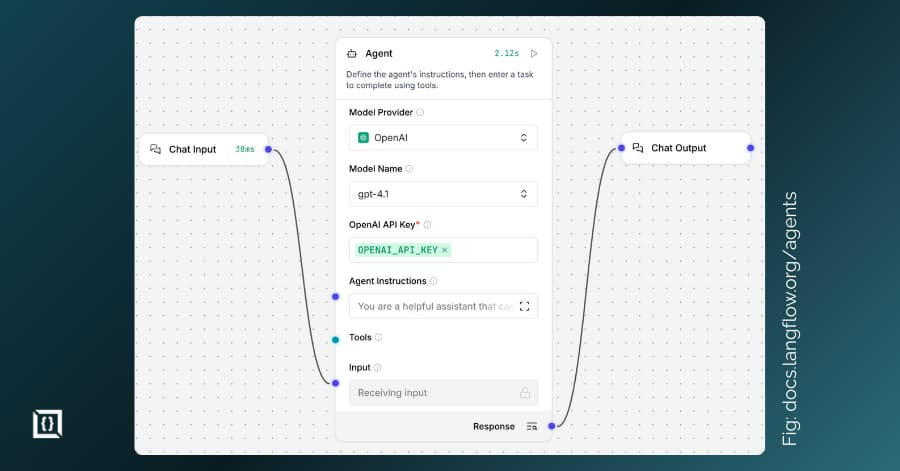

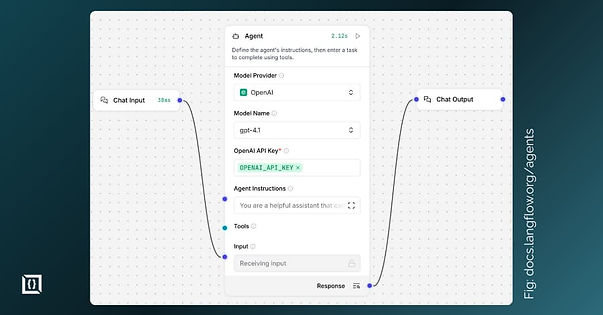

The Agent component serves as the central coordinator. It connects to an LLM provider (OpenAI, Anthropic, Google, or Ollama for local models) and manages tool orchestration. The component handles the reasoning loop that determines which tools to call, in what order, and how to interpret results.

Tools are functions the agent can call. LangFlow treats any component as a potential tool. Built-in options include calculators, URL fetchers, and search components. You can create custom tools in Python or connect MCP servers that expose external functionality.

Chat Input and Chat Output components handle user interaction. Chat Input receives queries from users or applications, and Chat Output displays the agent's responses.

Memory enables conversation continuity through stored chat history, allowing follow-up questions that reference earlier exchanges.

Instructions shape agent behavior through a system prompt that defines tone, approach, and constraints.
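One way to picture how these pieces meet at the model is a single list of chat messages: the instructions become a system message, the most recent stored history follows, and the new Chat Input value comes last. This sketch assumes the common role/content message format used by chat-completion APIs; the exact payload the Agent component builds may differ, and `build_messages` and `n_history` are illustrative names.

```python
def build_messages(instructions: str, history: list[dict], user_input: str,
                   n_history: int = 4) -> list[dict]:
    """Combine instructions, recent memory, and the new input into one request."""
    messages = [{"role": "system", "content": instructions}]
    # Keep only the last n_history stored messages (history[-0:] would keep all, so guard it).
    if n_history > 0:
        messages.extend(history[-n_history:])
    messages.append({"role": "user", "content": user_input})
    return messages

history = [
    {"role": "user", "content": "My name is Ada."},
    {"role": "assistant", "content": "Nice to meet you, Ada."},
]
msgs = build_messages("You are a concise assistant.", history, "What is my name?")
for m in msgs:
    print(m["role"], "->", m["content"])
```

Because the model only sees what is in this list, a follow-up such as "What is my name?" works only if the relevant earlier turn falls inside the history window.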

How to Create Your First LangFlow Agent

Choosing the Right Model

LangFlow supports models from OpenAI, Anthropic, Google, Mistral, and local options through Ollama.

OpenAI's GPT-4 handles complex reasoning well but costs more per token. GPT-3.5-turbo works for simpler tasks at lower cost. Anthropic's Claude excels at following nuanced instructions. For sensitive data, Ollama runs open-source models locally, so prompts never leave your machine.

Using the Agent Component

Start with the Simple Agent template: click New Flow, then select Simple Agent. This creates a working agent with the Chat Input, Agent, and Chat Output components already connected.

Configure the Agent by selecting your model provider and entering your API key. Add Agent Instructions to customize behavior, for example: "You are a helpful assistant that provides concise, accurate answers."

Connecting Tools and Flows

Tools give agents capabilities beyond text generation. The default template includes Calculator and URL tools. Add more by dragging components onto the canvas and connecting them to the Agent's Tools port.

To use any component as a tool, enable Tool Mode in its header menu. This exposes a Tool output you can connect to the agent.

Test in the Playground. Ask questions that require the connected tools and watch how the agent decides when to use them.

Configuring Tools for Agents

What Constitutes a Tool in LangFlow

A tool is any function wrapped with a common interface. Each tool has a name, description, and defined inputs/outputs. The description tells the agent what the tool does, helping it decide when to use it.

Built-in tools include calculators, URL fetchers, Python interpreters, and search components.
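The name/description/inputs contract can be sketched as a small dataclass. This is a hypothetical illustration, not LangFlow's internal tool class: `Tool`, `describe_tools`, and the `word_count` example are made up for the sketch. `describe_tools` renders the kind of catalog an agent's model reads when choosing a tool, which is why the description text matters so much.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Tool:
    name: str
    description: str
    func: Callable[..., str]
    params: dict[str, str] = field(default_factory=dict)  # argument name -> type label

    def __call__(self, **kwargs) -> str:
        # Reject calls that omit a declared input before running the function.
        missing = set(self.params) - set(kwargs)
        if missing:
            raise ValueError(f"{self.name}: missing arguments {sorted(missing)}")
        return self.func(**kwargs)

def describe_tools(tools: list[Tool]) -> str:
    """Render one line per tool: signature plus description."""
    lines = []
    for tool in tools:
        args = ", ".join(f"{name}: {kind}" for name, kind in tool.params.items())
        lines.append(f"- {tool.name}({args}): {tool.description}")
    return "\n".join(lines)

word_count = Tool(
    name="word_count",
    description="Counts the words in a piece of text.",
    func=lambda text: str(len(text.split())),
    params={"text": "str"},
)
print(describe_tools([word_count]))
print(word_count(text="agents call tools"))  # 3
```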

Attaching and Editing Tools for Agents

Drag a tool component onto your canvas, enable Tool Mode, and connect its Tool output to the Agent's Tools input. You can attach multiple tools to one agent.

Click Edit Tool Actions to see the available functions. Enable or disable specific actions, and edit their descriptions so the agent understands when to use each one.

Multi-Agent Flows: Using an Agent as a Tool

Agents can call other agents. Create a specialized sub-agent, enable Tool Mode on it, and connect its Tool output to your primary agent's Tools port.

Your main agent can then delegate tasks: it handles general queries directly and routes specialized requests to the sub-agent.
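The delegation pattern reduces to "a sub-agent is just another callable tool." In the sketch below, `billing_agent` is a hypothetical specialist (a real one would run its own LLM and tools), and keyword matching stands in for the main agent's LLM deciding which tool fits; none of these names come from LangFlow.

```python
from typing import Callable

def billing_agent(query: str) -> str:
    """Hypothetical specialized sub-agent exposed to the main agent as a tool."""
    return f"[billing] Checked invoices for: {query}"

# Each route pairs trigger keywords with the sub-agent that handles them.
ROUTES: list[tuple[set[str], Callable[[str], str]]] = [
    ({"invoice", "refund", "payment"}, billing_agent),
]

def main_agent(query: str) -> str:
    words = set(query.lower().replace("?", "").split())
    for keywords, sub_agent in ROUTES:
        if words & keywords:
            return sub_agent(query)  # delegate specialized requests
    return f"[main] Answered directly: {query}"

print(main_agent("Where is my refund?"))
print(main_agent("What can you do?"))
```

In LangFlow the routing decision comes from the model reading the sub-agent's tool description, so give the sub-agent a name and description that state exactly which requests it owns.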

Deploying and Using Agents in Real-World Applications

Use Cases: Customer Support, Automation, Data Retrieval

Customer support agents connect to knowledge bases and ticketing systems. When users ask questions, the agent searches documentation, retrieves relevant answers, and creates support tickets when issues require human follow-up. These agents reduce response times and handle common queries without staff involvement.
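The answer-or-escalate flow can be sketched in a few lines. The knowledge base is a hypothetical in-memory dict and the ticket list stands in for a real ticketing API; a production agent would use retrieval over your documentation and let the LLM judge whether an answer was found.

```python
from typing import Optional

KNOWLEDGE_BASE = {
    "reset password": "Use the 'Forgot password' link on the login page.",
    "change plan": "Go to Settings > Billing and choose a new plan.",
}

def search_docs(question: str) -> Optional[str]:
    """Return the first article whose topic words all appear in the question."""
    text = question.lower()
    for topic, answer in KNOWLEDGE_BASE.items():
        if all(word in text for word in topic.split()):
            return answer
    return None

def support_agent(question: str, tickets: list[dict]) -> str:
    answer = search_docs(question)
    if answer is not None:
        return answer
    # No documented answer: escalate to a human via a (simulated) ticket.
    ticket_id = len(tickets) + 1
    tickets.append({"id": ticket_id, "question": question})
    return f"I've opened ticket #{ticket_id}; a team member will follow up."

tickets: list[dict] = []
print(support_agent("How do I reset my password?", tickets))
print(support_agent("I was charged twice this month.", tickets))
```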

Automation agents handle repetitive workflows by connecting to email APIs, calendar services, or internal tools. They read incoming requests, determine appropriate actions, and execute them autonomously. Examples include processing invoice approvals, scheduling meetings based on email requests, or updating CRM records.

Data retrieval agents query databases and APIs on demand. Users ask questions in natural language; the agent translates them into database queries, executes them, and presents the results in a readable format. This pattern works well for business intelligence, report generation, and ad-hoc analysis.
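A minimal sketch of the translate, execute, present loop, using Python's built-in sqlite3 with an in-memory table. `translate_to_sql` is a hypothetical placeholder for the LLM's text-to-SQL step. The SELECT-only check illustrates one guard worth keeping when a model writes queries, though a database user with read-only permissions is the stronger control.

```python
import sqlite3

def translate_to_sql(question: str) -> str:
    """Hypothetical stand-in for the LLM's natural-language-to-SQL step."""
    if "total sales" in question.lower():
        return "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    raise ValueError(f"Cannot translate: {question!r}")

def answer_question(conn: sqlite3.Connection, question: str) -> str:
    sql = translate_to_sql(question)
    # Basic guard: only allow read queries generated by the model.
    if not sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("Only SELECT queries are allowed")
    rows = conn.execute(sql).fetchall()
    return "\n".join(f"{region}: {total:,.2f}" for region, total in rows)

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?)",
                 [("East", 1200.0), ("West", 800.5), ("East", 300.0)])
print(answer_question(conn, "What were total sales by region?"))
```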

Running Locally Versus Cloud Deployment

Local deployment suits development and sensitive data, because the data stays within your control.

Cloud deployment provides accessibility and scalability. Use the official Docker image:

```shell
docker run -p 7860:7860 langflowai/langflow:latest
```

This serves the LangFlow UI and API on port 7860.

For production, Kubernetes with LangFlow's Helm charts provides horizontal scaling and security features. Flightcontrol, Fly.io, Render, and Hetzner also work as hosting platforms.

Monitoring, Scaling and Best Practices

LangFlow integrates with LangSmith and Langfuse for observability. Track execution, tool calls, and response times.

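Scale by running multiple replicas behind a load balancer, and point all replicas at a shared external database such as PostgreSQL so they see the same flows and chat history. The Kubernetes Horizontal Pod Autoscaler (HPA) can add or remove replicas based on resource usage. Log which tools get called, where errors occur, and how long requests take.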
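For the logging half, a decorator around each tool function is a simple pattern when you write custom components. This is a generic Python sketch using the standard `logging` module, not a LangFlow feature; `fetch_price` is a hypothetical tool, and `CALL_LOG` is an illustrative in-memory record list. The key=value log format is easy to grep or parse.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("agent.tools")
CALL_LOG: list[dict] = []  # in-memory copy of each record, handy for tests and dashboards

def logged_tool(func):
    """Record tool name, outcome, and duration for every call."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "error"
        try:
            result = func(*args, **kwargs)
            status = "ok"
            return result
        finally:
            # Runs on success and on exceptions, so failures are logged too.
            duration_ms = (time.perf_counter() - start) * 1000
            CALL_LOG.append({"tool": func.__name__, "status": status,
                             "duration_ms": duration_ms})
            logger.info("tool=%s status=%s duration_ms=%.1f",
                        func.__name__, status, duration_ms)
    return wrapper

@logged_tool
def fetch_price(symbol: str) -> float:
    """Hypothetical tool with a hard-coded price table."""
    prices = {"ACME": 12.5}
    return prices[symbol]

fetch_price("ACME")
try:
    fetch_price("NOPE")
except KeyError:
    pass  # the failure is still recorded with status=error
```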

Advanced Topics & Pro Tips

Leveraging the MCP Tools Component

Model Context Protocol (MCP) is an open standard, introduced by Anthropic, for connecting AI applications to external tools and data sources. LangFlow functions as both an MCP client and an MCP server.

The MCP Tools component connects to MCP servers and exposes their functions to your agents. Thousands of MCP servers exist for different services: databases, file systems, APIs, and specialized tools. Add the component, configure the server connection via Stdio or SSE mode, and connect to your Agent's Tools port. The agent can then call any function that server provides.
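Under the hood, MCP messages are JSON-RPC 2.0: a client discovers tools with a `tools/list` request and invokes one with `tools/call`, passing the tool `name` and an `arguments` object. The sketch below only builds those payloads with the standard library. The transport (stdio or SSE) is omitted, the `query_database` tool and its arguments are hypothetical, and the MCP Tools component handles all of this for you.

```python
import itertools
import json
from typing import Optional

_ids = itertools.count(1)  # JSON-RPC request ids must be unique per connection

def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request with a fresh id."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)

list_tools = jsonrpc_request("tools/list")
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "query_database", "arguments": {"sql": "SELECT COUNT(*) FROM orders"}},
)
print(list_tools)
print(call_tool)
```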

LangFlow also runs as an MCP server. Your flows become tools that external clients like Cursor or Claude Desktop can call. Each project exposes its flows at the /api/v1/mcp/sse endpoint. Name and describe your flows clearly, since these become the tool descriptions that other agents use for decision-making.

Custom Components and Integrations

Write custom components in Python when built-ins do not fit. Click the code button on any component to view its implementation.

LangFlow exposes every flow as a REST API endpoint. Call agents programmatically from any application. Export flows as JSON for version control.
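A minimal sketch of calling a flow over HTTP with only the standard library. It assumes the `/api/v1/run/<flow_id>` endpoint, the `x-api-key` header, and the `input_value`, `input_type`, `output_type`, and `session_id` body fields described in LangFlow's API documentation for recent 1.x releases. Compare them with the API code snippet LangFlow shows for your flow, since details can vary between versions. The flow ID and key below are placeholders.

```python
import json
import urllib.request
from typing import Optional

def build_run_request(base_url: str, flow_id: str, api_key: str,
                      message: str, session_id: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a POST request that runs a flow with one chat message."""
    payload = {"input_value": message, "input_type": "chat", "output_type": "chat"}
    if session_id is not None:
        payload["session_id"] = session_id  # keeps chat history separate per conversation
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/v1/run/{flow_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )

req = build_run_request("http://localhost:7860", "YOUR_FLOW_ID", "YOUR_API_KEY",
                        "Summarize today's open tickets", session_id="user-42")
print(req.get_method(), req.full_url)
# To actually send it (requires a running LangFlow server):
# with urllib.request.urlopen(req, timeout=60) as resp:
#     print(json.load(resp))
```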

Troubleshooting Common Issues with Agents

Token limits cause failures when conversations grow long. The LLM context window fills with chat history and tool responses. Reduce the Number of Chat History Messages setting in the Agent component. For extended conversations, implement summarization of earlier exchanges.
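A sketch of budget-based trimming with a summary placeholder. Two assumptions to flag: token counts are approximated as characters divided by 4 (a rough rule of thumb for English text; use your provider's tokenizer for real limits), and `summarize` is a hypothetical stand-in for an extra LLM call that condenses the dropped turns.

```python
def approx_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token for English text."""
    return max(1, len(text) // 4)

def summarize(messages: list[dict]) -> str:
    """Placeholder: a real agent would ask the LLM to summarize these turns."""
    return " | ".join(m["content"][:30] for m in messages)

def trim_history(history: list[dict], budget: int) -> list[dict]:
    """Keep the newest messages that fit in `budget`; fold older ones into one summary."""
    kept: list[dict] = []
    used = 0
    for message in reversed(history):
        cost = approx_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()
    dropped = history[: len(history) - len(kept)]
    if dropped:
        summary = f"Summary of {len(dropped)} earlier messages: {summarize(dropped)}"
        kept.insert(0, {"role": "system", "content": summary})
    return kept

history = [{"role": "user", "content": f"message {i} " + "x" * 30} for i in range(10)]
trimmed = trim_history(history, budget=30)
print(len(trimmed), trimmed[0]["content"][:30])
```

The summary's own tokens are not counted against the budget here, so leave headroom for it when choosing `budget`.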

Tool failures often stem from incorrect configuration. Test tools individually before connecting them to agents. Verify that API keys are valid and have the correct permissions. Check that tool descriptions accurately reflect what each tool does.

If agents misuse tools or call wrong ones, edit action descriptions to clarify purpose. Disable unnecessary actions that confuse tool selection. Clear descriptions work better: "Fetches HTML content from a URL" beats "Gets web stuff."

Chain errors occur when agents cannot interpret tool outputs. Ensure outputs match expected formats. Add error handling in custom components to return meaningful messages when operations fail.
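A sketch of that advice for a custom tool: catch the expected failures and always return the same JSON shape, with an `error` message the model can act on instead of a stack trace. `lookup_order` and the `ORDERS` table are hypothetical.

```python
import json

ORDERS = {"A100": {"status": "shipped"}}  # hypothetical data source

def lookup_order(order_id: str) -> str:
    """Return JSON with ok=True and the status, or ok=False and an actionable error."""
    order_id = order_id.strip().upper()
    if not order_id:
        return json.dumps({"ok": False, "error": "order_id is empty; ask the user for it."})
    try:
        order = ORDERS[order_id]
    except KeyError:
        return json.dumps({"ok": False,
                           "error": f"No order {order_id}; ask the user to confirm the id."})
    return json.dumps({"ok": True, "order_id": order_id, "status": order["status"]})

print(lookup_order(" a100 "))
print(lookup_order("Z999"))
```

Because success and failure share one shape, the agent never has to guess how to read the result.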

Next Steps

LangFlow agents combine LLM reasoning with tool execution for autonomous task completion. The Agent component orchestrates this through a visual interface.

Building agents starts with selecting a model, configuring instructions, and attaching tools. Any component becomes a tool by enabling Tool Mode.

Deployment ranges from local development to Kubernetes. MCP integration extends capabilities through standardized protocols.

If you want consultation or hands-on help building, refining, or troubleshooting LangFlow agents in real projects, you can also connect with us.

Frequently Asked Questions

How much does LangFlow cost for production agent deployments?

LangFlow is open source with no licensing fees. Your costs come from infrastructure (servers, databases) and external API calls to providers like OpenAI or Anthropic. Self-hosting on your own hardware eliminates platform costs entirely. Cloud deployments add hosting fees based on your provider and resource allocation. For high-volume deployments, the primary expense is typically LLM API usage rather than LangFlow infrastructure.
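A back-of-the-envelope estimate makes the API-usage point concrete. The prices below are hypothetical placeholders, not any provider's current rates; substitute your model's published per-million-token prices. One agent request can involve several LLM calls (each tool step re-sends context), so use token counts measured per full agent run.

```python
def monthly_llm_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                     usd_per_m_input: float, usd_per_m_output: float,
                     days: int = 30) -> float:
    """Estimate monthly LLM spend from average tokens per agent run."""
    per_run = (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000
    return requests_per_day * days * per_run

# Hypothetical workload and prices: 1,000 runs/day, 2,000 input + 500 output tokens per run.
cost = monthly_llm_cost(1_000, 2_000, 500, usd_per_m_input=2.50, usd_per_m_output=10.00)
print(f"${cost:,.2f} per month")  # $300.00 per month
```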

How do I migrate agents from AutoGen, CrewAI, or LangChain to LangFlow?

LangFlow builds on LangChain, so concepts transfer directly. Export your existing agent logic and recreate it visually. Tools map to components with Tool Mode enabled. Memory configurations exist in Agent component settings. Multi-agent patterns from CrewAI translate to connecting multiple Agent components. Start by rebuilding one agent, verify functionality, then migrate others incrementally. Because custom components are written in Python, you can also reuse existing LangChain code inside them.

What are the performance benchmarks for LangFlow agents?

Performance depends primarily on your LLM choice and tool complexity. LangFlow adds minimal overhead to API calls. Measure latency by timing requests in your specific setup. Monitor with LangSmith or Langfuse to identify bottlenecks. Most latency comes from LLM inference and external tool calls rather than LangFlow processing. Typical agent responses complete within 2-10 seconds, depending on model and tool chain complexity.
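One way to do that timing in Python: call the agent repeatedly and report percentiles rather than a single average, since tool calls make latency uneven. `measure_latency` is a hypothetical helper. In practice `call` would send a real request to your flow's API endpoint; here a local stub stands in so the sketch runs anywhere.

```python
import statistics
import time
from typing import Callable

def measure_latency(call: Callable[[], object], runs: int = 20) -> dict[str, float]:
    """Time `call` repeatedly and return p50/p95/max latency in seconds."""
    if runs < 2:
        raise ValueError("need at least 2 runs to compute percentiles")
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        durations.append(time.perf_counter() - start)
    cuts = statistics.quantiles(durations, n=100)  # 99 cut points: cuts[49] ~ p50, cuts[94] ~ p95
    return {"p50_s": cuts[49], "p95_s": cuts[94], "max_s": max(durations)}

stats = measure_latency(lambda: sum(range(100_000)))
print({name: round(value * 1000, 3) for name, value in stats.items()})  # milliseconds
```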

Which API integrations work with LangFlow agents out-of-the-box?

LangFlow includes components for search providers (Google, Tavily, SerpAPI), databases (Astra DB, PostgreSQL), file operations, web fetching, and code execution. MCP support adds thousands more integrations through available servers. Connect any REST API using the API Request component or build custom components for specific services. The component library expands regularly with community contributions.

Can LangFlow agents maintain conversation state across sessions?

Yes. Agents store chat history grouped by session ID. Use custom session IDs to segregate conversations for different users or applications. By default, history uses LangFlow's internal storage, a local SQLite database. For production, configure PostgreSQL (through the LANGFLOW_DATABASE_URL environment variable) to persist state across restarts and share it across multiple instances. You can also implement custom memory backends for specialized requirements.
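LangFlow manages this storage itself, so you do not need to write it; the sketch below only illustrates the session-ID partitioning idea. It uses Python's built-in sqlite3 as a stand-in for PostgreSQL, and the `SessionHistory` class and `messages` table are hypothetical names, not LangFlow's schema.

```python
import sqlite3

class SessionHistory:
    """Chat history partitioned by session ID (illustrative schema)."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " session_id TEXT NOT NULL, role TEXT NOT NULL, content TEXT NOT NULL)"
        )

    def add(self, session_id: str, role: str, content: str) -> None:
        self.conn.execute(
            "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
            (session_id, role, content),
        )
        self.conn.commit()

    def get(self, session_id: str, limit: int = 100) -> list[dict]:
        """Return the newest `limit` messages for one session, oldest first."""
        rows = self.conn.execute(
            "SELECT role, content FROM ("
            " SELECT id, role, content FROM messages"
            " WHERE session_id = ? ORDER BY id DESC LIMIT ?"
            ") ORDER BY id",
            (session_id, limit),
        ).fetchall()
        return [{"role": role, "content": content} for role, content in rows]

store = SessionHistory(sqlite3.connect(":memory:"))
store.add("alice", "user", "Hi, I'm Alice.")
store.add("bob", "user", "Hi, I'm Bob.")
store.add("alice", "assistant", "Hello Alice!")
print(store.get("alice"))
```

A distinct session ID per user or per conversation is what keeps one user's follow-up questions from seeing another user's history.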