How to Build an MVP Using AI: Step‑by‑Step Guide

- Jarvy Sanchez

- Aug 15, 2025

- 13 min read

Updated: Aug 19, 2025

Product development isn’t what it used to be. Projects that once devoured months of your life (and most of your budget) can now come together in days with the right AI sidekicks. For founders and small business owners, this isn’t just about working smarter; it’s about testing and validating ideas at warp speed.

In this guide, we’ll take you from “I have an idea!” to “It’s live!” using AI to strip away the slow, expensive parts of building an MVP. Whether you’re allergic to code or just want to fast-track your launch, you’ll get the practical playbook for turning your concept into a test-ready product without draining your wallet or your sanity.

1. What Is an AI‑Powered Minimum Viable Product (MVP)?

A Minimum Viable Product (MVP) is the leanest version of your product that still delivers real value and gathers useful feedback. The goal? Test your core idea with minimal time, money, and effort.

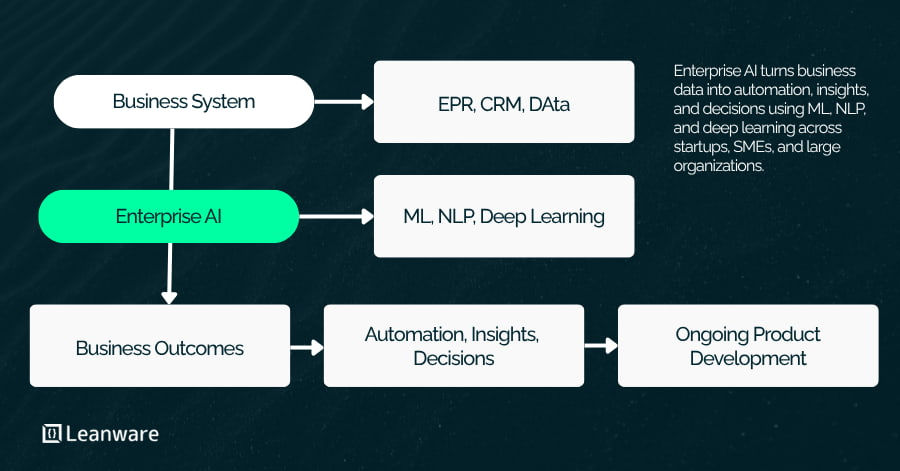

An AI-powered MVP takes that idea and hits the turbo button. It’s not just about building products that use AI; it’s about using AI to build faster, smarter, and cheaper. From brainstorming and market research to prototyping, coding, testing, and feedback analysis, AI can trim timelines, cut costs, and boost decision-making at every step.

For non-technical founders, this is a game changer: AI becomes your secret team of experts, helping you do in days what might otherwise take months (and a small army of specialists).

2. Why Build Your MVP with AI?

AI in MVP development isn’t just the latest buzz; it’s quickly becoming a survival skill. Early-stage companies that know how to wield AI can validate ideas faster, pivot quicker, and save their resources for scaling what actually works.

Traditional MVP cycles often drag through endless planning, design, coding, and testing. AI changes the game, automating grunt work, spitting out code snippets, generating design mockups, and even running market research and competitive analysis for you. In markets where speed decides the winners, AI can be the edge that gets you there first.

2.2 Potential Drawbacks and Trade‑Offs

Quality and Consistency Issues: AI-generated code and designs may lack the refinement and edge-case handling that experienced professionals provide. While functional for MVPs, you'll likely need human oversight and eventual refactoring for production-ready products.

AI Hallucinations and Errors: AI tools sometimes generate plausible-sounding but incorrect information or code. This requires careful validation and testing, especially for critical functionality or market research.

Limited Customization: AI tools often work best with common patterns and established approaches. If your MVP requires highly specialized or innovative solutions, you may find AI assistance less helpful and need traditional development approaches.

Dependency Risks: Relying heavily on specific AI tools creates potential vulnerabilities. Tool availability, pricing changes, or capability limitations could impact your development process.

The "Black Box" Problem: Understanding why AI made certain recommendations or generated specific code can be challenging. This opacity can make debugging and future modifications more difficult.

Despite these challenges, most founders find the advantages significantly outweigh the drawbacks, especially during the early validation phase when speed and cost efficiency are paramount.

3. How to Build an MVP Using AI – Phase Overview

Building an AI-powered MVP follows a structured approach that leverages artificial intelligence at each critical stage. This process transforms the traditional development cycle from a linear, time-intensive journey into an agile, AI-augmented sprint from idea to launch.

The key to success lies in understanding where AI tools provide the most value and how to combine them effectively. Rather than replacing human judgment, AI amplifies your decision-making capabilities and automates routine tasks, allowing you to focus on strategic choices and user-centered design decisions.

3.1 Phase 1: Brainstorm Ideas & Research with AI

The foundation of any successful MVP is thorough market research and validated assumptions. AI tools like ChatGPT, Claude, Perplexity, and Gemini speed this up by producing first-pass market analysis and competitive overviews in minutes.

Start by using conversational AI to explore your target market. Ask specific questions about market size, customer pain points, existing solutions, and potential opportunities. For example, prompt an AI with: "Analyze the market for productivity tools targeting remote software developers. What are the main pain points, who are the key competitors, and what gaps exist in current solutions?"

Generate detailed user personas by describing your assumptions and asking AI to expand them with realistic details, behaviors, and motivations. AI can help you think through edge cases and user scenarios you might not have considered initially.

Use AI for competitive analysis by asking it to identify direct and indirect competitors, analyze their strengths and weaknesses, and suggest differentiation opportunities. While you should verify this information independently, AI provides an excellent starting point for comprehensive market research.

Validate your assumptions by presenting them to AI and asking for potential counterarguments or overlooked factors. This red-team approach helps identify weaknesses in your thinking before you invest significant resources.

3.2 Phase 2: Create Digital Mockups & Storyboards

Visual design and user experience planning become dramatically faster with AI-powered design tools. Platforms like Uizard and Galileo AI can transform simple sketches or text descriptions into polished wireframes and interactive prototypes.

Begin by describing your desired user interface in natural language. Modern AI design tools can interpret descriptions like "create a clean, modern dashboard for project management with a sidebar navigation, main content area showing project cards, and a top bar with user profile and notifications" and generate multiple design variations.

Create user flow diagrams by describing the customer journey through your product. AI can help visualize complex user paths and identify potential friction points in the user experience.

Iterate rapidly on design concepts by requesting variations, different color schemes, or alternative layouts. The speed of AI-generated designs allows you to explore dozens of options quickly, helping you identify the most promising directions before investing in detailed development.

Generate consistent design systems by asking AI to create style guides, color palettes, and component libraries based on your initial designs. This ensures visual consistency across your MVP while saving significant design time.

3.3 Phase 3: Generate Code or Build Features Using AI

Code generation is one of AI's most transformative capabilities for MVP development. Tools like GitHub Copilot, Amazon Q Developer (formerly Amazon CodeWhisperer), and Tabnine can significantly accelerate development, although the generated code still needs review to maintain quality.

Start with backend development by describing your API requirements in plain English. AI can generate endpoint structures, database schemas, authentication logic, and basic CRUD operations. For example, you might prompt: "Create a REST API for a task management system with user authentication, project creation, and task assignment functionality."
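
As a rough illustration of what such a prompt might yield, here is a minimal in-memory sketch of the data model and operations behind a task management API. It deliberately leaves out the HTTP layer, real authentication, and persistence. The names `TaskStore`, `create_user`, `create_project`, `create_task`, and `assign_task` are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Optional


@dataclass
class Task:
    id: int
    project_id: int
    title: str
    assignee_id: Optional[int] = None


@dataclass
class TaskStore:
    """Hypothetical in-memory store; a real MVP would use a database."""
    users: dict = field(default_factory=dict)
    projects: dict = field(default_factory=dict)
    tasks: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def create_user(self, name: str) -> int:
        user_id = next(self._ids)
        self.users[user_id] = name
        return user_id

    def create_project(self, name: str) -> int:
        project_id = next(self._ids)
        self.projects[project_id] = name
        return project_id

    def create_task(self, project_id: int, title: str) -> Task:
        # Reject tasks that point at a project that does not exist.
        if project_id not in self.projects:
            raise KeyError(f"unknown project {project_id}")
        task = Task(id=next(self._ids), project_id=project_id, title=title)
        self.tasks[task.id] = task
        return task

    def assign_task(self, task_id: int, user_id: int) -> Task:
        # Reject assignments to users that do not exist.
        if user_id not in self.users:
            raise KeyError(f"unknown user {user_id}")
        task = self.tasks[task_id]
        task.assignee_id = user_id
        return task


store = TaskStore()
alice = store.create_user("alice")
project = store.create_project("launch")
task = store.create_task(project, "write landing page")
store.assign_task(task.id, alice)
```

Keeping the core logic separate from any web framework like this makes it easier to review and test what the assistant generated before wiring it to real endpoints.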

Develop frontend components by describing the user interface elements you need. AI can generate React components, Vue.js templates, or vanilla JavaScript code based on your design mockups and functional requirements.

Integrate third-party services more quickly by asking AI to generate connection code for popular services like Stripe for payments, SendGrid for email, or Firebase for real-time data. AI assistants are usually familiar with common API patterns, but check generated integration code against the provider's current documentation before relying on it.

However, remember that AI-generated code should be reviewed and tested thoroughly. While often functional, it may not follow your specific coding standards or handle edge cases appropriately. Pairing AI assistance with human oversight ensures both speed and quality.

3.4 Phase 4: Test, QA, and Feedback Loop

Quality assurance becomes more efficient and comprehensive with AI-powered testing tools. Platforms like CodiumAI (now Qodo) and Testim can automate test creation and execution, catching bugs early in the development process.

Generate comprehensive test suites by describing your application's functionality to AI testing tools. These platforms can create unit tests, integration tests, and even user interface tests based on your code and requirements.

Automate edge case detection by asking AI to identify potential failure scenarios you might not have considered. AI excels at generating "what if" scenarios that can reveal hidden vulnerabilities in your MVP.
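
To make the edge-case idea concrete, the sketch below pairs a small function of the kind an assistant might generate with the "what if" checks a reviewer could ask for. The function `split_bill` and its rules are hypothetical, invented purely for illustration.

```python
def split_bill(total_cents: int, people: int) -> list:
    """Split a bill so the shares always sum back to the total."""
    if people <= 0:
        raise ValueError("people must be a positive integer")
    if total_cents < 0:
        raise ValueError("total_cents must not be negative")
    base, remainder = divmod(total_cents, people)
    # The first `remainder` people each pay one extra cent.
    return [base + 1 if i < remainder else base for i in range(people)]


def run_edge_case_checks() -> None:
    # Happy path: an even split.
    assert split_bill(900, 3) == [300, 300, 300]
    # What if the total does not divide evenly?
    assert split_bill(1000, 3) == [334, 333, 333]
    # What if the bill is zero?
    assert split_bill(0, 2) == [0, 0]
    # What if there are no people? A naive version would divide by zero.
    try:
        split_bill(1000, 0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for zero people")


run_edge_case_checks()
```

The uneven-split and zero-people cases are the sort of scenario that tends to be missing from a first draft, which is why asking for them explicitly is worthwhile.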

Implement continuous feedback loops by using AI to analyze user feedback, crash reports, and usage patterns. This analysis helps prioritize which issues to address first and which features to develop next.

Create automated monitoring and alerting systems using AI tools that can detect anomalies in user behavior, performance metrics, or error rates, allowing you to respond quickly to issues.
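
The idea behind this kind of alerting can be sketched with a simple statistical rule. The example below is not an AI tool; it is a plain z-score check that stands in for what a monitoring product would automate. The function name `is_anomalous`, the threshold of 3, and the sample error rates are all assumptions made for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history: list, latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `threshold` standard deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # Not enough data to estimate spread.
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past values are identical, so any change counts as unusual.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical hourly error rates (fraction of failed requests).
hourly_error_rates = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011]

print(is_anomalous(hourly_error_rates, 0.011))  # False: within the normal range
print(is_anomalous(hourly_error_rates, 0.080))  # True: far above the usual rate
```

A real setup would feed in live metrics and route a positive result to an alerting channel; the threshold is a tuning choice, not a fixed rule.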

3.5 Phase 5: Refine, Integrate, and Launch

The final phase focuses on polishing your MVP and preparing it for real users. AI tools can assist with deployment, integration with essential third-party services, and creating supporting materials like documentation and marketing content.

Optimize performance by asking AI to review your code for potential bottlenecks and suggest improvements. Many AI tools can identify common performance issues and recommend optimizations.

Integrate essential services like payment processing (Stripe), analytics (Google Analytics), email services (SendGrid), and hosting platforms (Vercel, Netlify) using AI-generated integration code and configuration guidance.

Create user documentation and help content by describing your product features to AI writing tools. These can generate user guides, FAQ sections, and onboarding materials that help users understand and adopt your MVP.

Develop launch materials including landing pages, press releases, and social media content using AI writing and design tools. While you'll want to review and personalize this content, AI provides an excellent starting point for launch communications.

4. Top AI Tools & Solutions for MVP Development

Selecting the right AI tools can make the difference between a successful AI-powered MVP development process and a frustrating experience. The key is understanding each tool's strengths and knowing when to deploy them effectively.

4.1 AI for Planning & Research

ChatGPT excels at brainstorming, strategic thinking, and generating comprehensive analyses. Its conversational interface makes it ideal for exploring ideas, creating user personas, and thinking through complex business scenarios. Use ChatGPT when you need creative problem-solving or want to explore multiple approaches to a challenge.

Claude provides more structured, analytical responses and excels at processing large amounts of information. It's particularly useful for market research analysis, competitive intelligence, and strategic planning. Claude tends to provide more balanced, nuanced perspectives on complex business questions.

Perplexity combines AI responses with real-time web search, making it invaluable for current market research, competitor analysis, and trend identification. Use Perplexity when you need up-to-date information or want to verify AI-generated insights against current market conditions.

Each tool has distinct strengths: ChatGPT for creative exploration, Claude for analytical depth, and Perplexity for current information. Using all three at different points in the research phase is a common approach.

4.2 AI‑Assisted Design Tools

Uizard transforms hand-drawn sketches and wireframes into digital prototypes, making it perfect for founders who prefer to start with pen and paper. Its strength lies in bridging the gap between initial concepts and digital designs.

Galileo AI generates complete user interfaces from text descriptions, allowing non-designers to create professional-looking mockups quickly. It excels at creating consistent design systems and can generate multiple variations of the same concept.

Both tools integrate well with popular design platforms like Figma, allowing you to refine AI-generated designs with traditional design tools. The key advantage is speed—you can explore dozens of design concepts in the time it would traditionally take to create just a few.

4.3 AI‑Based Code Assistants

GitHub Copilot provides broad code generation capabilities, supporting multiple programming languages and frameworks. It excels at generating boilerplate code, API integrations, and common programming patterns. Copilot works best when you provide clear context about what you're trying to accomplish.

Cursor offers a full IDE experience with integrated AI capabilities that go beyond simple code completion. It excels at understanding entire codebases, enabling complex refactoring, debugging assistance, and architectural discussions. Cursor's ability to work with multiple files simultaneously makes it particularly powerful for larger projects where context across the entire application matters.

Claude Code provides agentic coding capabilities directly from the command line, allowing developers to delegate entire coding tasks to Claude. It excels at autonomous problem-solving, from initial implementation to testing and debugging. Claude Code is particularly effective for developers who prefer terminal-based workflows and want an AI assistant that can work independently on well-defined coding objectives.

All three tools work best when paired with experienced developers who can review and refine the generated code. They excel at handling routine tasks, allowing human developers to focus on architecture and complex problem-solving.

4.4 AI in Testing & Quality Assurance

CodiumAI automatically generates comprehensive test suites based on your existing code, helping ensure thorough test coverage without manual test writing. It excels at identifying edge cases and generating tests for complex functions.

Testim creates and maintains automated UI tests that adapt to changes in your application. Its AI-powered maintenance reduces the traditional burden of keeping UI tests current as your product evolves.

These tools are particularly valuable for small teams who might not have dedicated QA resources but still need comprehensive testing to ensure MVP quality.

4.5 AI‑Enabled Low‑Code or No‑Code Platforms

Bubble combines traditional no-code development with AI-powered features for design and workflow creation. It's ideal for founders without coding backgrounds who want to build sophisticated web applications.

FlutterFlow offers AI-assisted mobile app development with visual programming interfaces. It generates Flutter code automatically, allowing for both rapid development and the ability to export and customize the underlying code.

Both platforms have limitations compared to custom development—they may not support highly specialized features or complex integrations. However, they're excellent for validating product concepts quickly and can often handle the functionality needed for effective MVPs.

5. A Real-World Case Study: ZoomInfo's GitHub Copilot Enterprise Deployment

Source: Bakal, Gal, et al. "Experience with GitHub Copilot for Developer Productivity at Zoominfo." arXiv preprint arXiv:2501.13282 (2025). Available at: https://arxiv.org/html/2501.13282

To demonstrate the power of AI-assisted development at enterprise scale, let's examine how ZoomInfo, a leading Go-To-Market Intelligence Platform, systematically evaluated and deployed GitHub Copilot across its engineering organization of over 400 developers.

The Challenge

ZoomInfo needed to enhance developer productivity across a complex platform managing hundreds of millions of business profiles and processing billions of daily events. The engineering team, distributed globally across the US, Europe, India, and Israel, works with diverse technology stacks including TypeScript, Python, Java, and JavaScript across thousands of code repositories.

1. Initial Assessment Phase (July 10-17, 2023)

ZoomInfo began with a small-scale qualitative assessment involving five engineers to evaluate GitHub Copilot's potential impact on their development workflows.

Key Results:

- Overall experience rating: 8.8 out of 10

- Productivity improvement rating: 8.6 out of 10

- Code standards alignment: All participants reported good to excellent alignment

What Worked:

- Strong adaptation to existing codebase patterns and conventions

- Particularly effective for unit test generation and boilerplate code

- No reported negative impact on code quality

Challenges Identified:

- Need for modification of suggested code (60% of participants)

- Limited visibility across multiple projects

- Potential over-reliance on automated suggestions

2. Trial Recruitment Phase (July 17 - August 14, 2023)

The company implemented a structured recruitment process using stratified voluntary sampling to ensure representative participation across technical specializations, experience levels, and geographical locations.

Trial Setup:

- 126 engineers participated (32% of total developers)

- Comprehensive compliance requirements including security training

- Documentation of AI governance framework and data ethics guidelines

- Structured application and prerequisite verification process

3. Two-Week Controlled Trial (August 15-29, 2023)

Trial Results (72 responses, 57% response rate):

- Mean satisfaction rating: 8.0 out of 10

- Mean productivity improvement rating: 7.6 out of 10

- Mean security vulnerability assessment confidence: 8.2 out of 10

Key Findings:

- Developers reported time savings for generating boilerplate code, unit tests, and documentation

- Majority reported good to excellent alignment with coding standards

- No participants reported decline in code quality

- Tool was useful across various languages and tech stacks with some inconsistencies

4. Full Rollout Phase (September 7, 2023)

Following successful trial analysis, ZoomInfo initiated enterprise-wide deployment using a ServiceNow workflow for measured license distribution and compliance tracking.

5. Production Results & Impact

Quantitative Results (26 days of data, Nov-Dec 2024):

Overall Performance:

- Average acceptance rate: 33% for suggestions, 20% for lines of code

- Daily averages: ~6,500 suggestions, ~15,000 lines suggested

- Total impact: Hundreds of thousands of lines of AI-generated code in production

Language-Specific Performance:

- Top performers: TypeScript, Java, Python, JavaScript (30%+ acceptance rates)

- Lower performers: HTML, CSS, JSON, SQL (14-32% acceptance rates)

- Most suggestions: TypeScript led with highest volume and acceptance

Editor Performance:

- JetBrains: More widespread usage, 30% suggestion acceptance

- VS Code: Similar suggestion acceptance, 50% higher line acceptance rate
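
The reported averages can be turned into a rough daily volume. The sketch below simply multiplies the figures listed above. It assumes the average acceptance rates apply uniformly to the daily averages, which the case study does not state, so treat the results as order-of-magnitude estimates only.

```python
# Figures reported above for the 26-day measurement window.
suggestions_per_day = 6_500
lines_suggested_per_day = 15_000
suggestion_acceptance = 0.33
line_acceptance = 0.20
days_measured = 26

# Assumption: the average rates apply evenly to the daily averages.
accepted_suggestions_per_day = suggestions_per_day * suggestion_acceptance
accepted_lines_per_day = lines_suggested_per_day * line_acceptance
accepted_lines_in_window = accepted_lines_per_day * days_measured

print(round(accepted_suggestions_per_day))  # 2145
print(round(accepted_lines_per_day))        # 3000
print(round(accepted_lines_in_window))      # 78000
```

At roughly 3,000 accepted lines per day, a cumulative total in the hundreds of thousands is consistent with usage sustained over the period between the September 2023 rollout and the late-2024 measurement window.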

Qualitative Results:

- Developer Satisfaction (DevSat Score): 72%, the highest among all development tools

Productivity Impact:

- 90% of respondents reported time reduction with median savings of 20%

- 63% of respondents completed more tasks per sprint

- 77% of respondents reported improved work quality

Sample Developer Feedback:

- Positive: "Github Copilot has been a great productivity tool after I learned how to leverage it for certain things."

- Neutral: "Github Copilot is a hit or miss with correct code in VSCode... it's usually correct and saves time but it's still one line at a time."

- Areas for improvement: Inconsistent performance on domain-specific tasks requiring additional oversight

6. Key Learnings & Limitations

Observed Limitations:

- Contextual Understanding: Struggles with domain-specific logic

- Security Concerns: Requires additional vetting of auto-generated code

- Creativity Limitations: Generates predictable, less innovative solutions

Success Factors:

- Systematic phased approach with proper governance framework

- Comprehensive security training and compliance requirements

- Balanced measurement using both quantitative metrics and qualitative feedback

- Enterprise-grade implementation with proper license management and tracking

7. Aftermath & Business Impact

As of the Nov-Dec 2024 measurement window, more than a year after the September 2023 rollout:

- Average suggestion acceptance rate of 33%, with variation by language

- Hundreds of thousands of lines of AI-generated code in production

- High developer satisfaction maintained at 72%

- 20% median time savings reported across development tasks

- No reported decline in code quality

Key Takeaways

What AI Excelled At:

- Boilerplate code generation and unit test creation

- Documentation and commenting

- Pattern recognition and routine implementation tasks

- Learning curve reduction for new technologies

What Still Required Human Expertise:

- Strategic decision-making and architecture design

- Domain-specific logic implementation

- Security considerations and compliance requirements

- Code quality assurance and peer review

- Creative problem-solving for complex challenges

Cost-Benefit Analysis:

- ROI achieved through: a self-reported median time saving of 20% among survey respondents, in an organization of 400+ developers

- Minimal additional costs: Standard GitHub Copilot Business licensing

- Risk mitigation: Comprehensive training and governance framework helped guard against security and quality issues

This case study demonstrates that with proper planning, governance, and realistic expectations, AI-assisted development tools can provide significant productivity gains in enterprise environments while maintaining code quality and security standards.

6. FAQs

How long does it take to build an MVP with AI? With AI assistance, a functional MVP can typically be built in 1-4 weeks, compared to 2-6 months using traditional development methods. Simple concepts might be prototyped in days, while more complex products require several weeks. The key variables are product complexity, team experience with AI tools, and the level of polish required for initial user testing.

What are the best AI tools for startups? The most valuable AI tools for startups include ChatGPT or Claude for planning and research, GitHub Copilot for code generation, Galileo AI or Uizard for design, and CodiumAI for testing. The specific best tools depend on your team's technical skills and product requirements. Start with conversational AI for planning, then add specialized tools as needed.

Is AI good enough to write production code? AI can generate functional code quickly, but it typically requires human review and refinement for production use. AI excels at boilerplate code, standard implementations, and routine programming tasks. However, complex business logic, security considerations, and performance optimization usually need human expertise. Think of AI as a highly capable coding assistant rather than a replacement for developers.

Can I build an MVP with no coding skills? Yes, using no-code platforms like Bubble or FlutterFlow combined with AI assistance, non-technical founders can build functional MVPs. However, you may be limited in customization options and complex integrations. For simple web applications and standard mobile apps, no-code plus AI can be very effective. More complex products may require partnering with developers.

How much does it cost to build an AI-powered MVP? AI tool subscriptions typically cost $20-100 per month during MVP development. Additional costs include hosting ($10-50/month), third-party service integrations ($0-200/month), and potential contractor fees if you need specialized help. Total costs often range from $500-5,000, compared to $25,000-100,000 for traditional MVP development.
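
As a quick sanity check on those ranges, the sketch below adds up only the recurring monthly items quoted above. The three-month horizon is an assumption made for illustration; contractor fees are excluded because no figure is given for them.

```python
# Monthly ranges quoted above, in US dollars (low, high).
monthly_costs = {
    "ai_tools": (20, 100),
    "hosting": (10, 50),
    "integrations": (0, 200),
}


def recurring_cost(months: int) -> tuple:
    """Return the (low, high) recurring spend over `months`, excluding contractor fees."""
    low = sum(lo for lo, _ in monthly_costs.values()) * months
    high = sum(hi for _, hi in monthly_costs.values()) * months
    return low, high


print(recurring_cost(1))  # (30, 350)
print(recurring_cost(3))  # (90, 1050)
```

Recurring tools and services alone come to $30-350 per month, so most of a $500-5,000 total would have to come from contractor help or a longer build period.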

Should my MVP actually use AI, or just be built with AI tools? These are separate decisions. Your MVP might use AI features if they solve core customer problems, but you can also build non-AI products using AI development tools. Focus first on what your customers need. If AI features provide clear value, include them. If not, you can still benefit from AI-assisted development tools to build faster and cheaper.

What's the biggest mistake founders make with AI-powered MVPs? The most common mistake is over-relying on AI without sufficient human oversight, leading to products that work in demos but fail in real-world use. Other mistakes include choosing the wrong AI tools for their use case, not validating AI-generated market research with real customers, and assuming AI can handle complex business logic without human refinement. Success requires balancing AI efficiency with human judgment.
